TensorFlow and Automatic Differentiation

Automatic differentiation (AD) is an essential technique for optimizing complex algorithms. It is useful for implementing machine learning algorithms such as backpropagation for training neural networks. TensorFlow's automatic differentiation feature lets you calculate gradients automatically. In this article, we will explore how TensorFlow's `GradientTape` works and how it can be used for automatic differentiation.
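As a first illustration, the sketch below differentiates two small polynomials with `tf.GradientTape`. It assumes TensorFlow 2.x running eagerly, and the functions being differentiated are arbitrary examples.

Operations executed inside the `with` block are recorded on the tape. `tape.gradient` then replays them in reverse to compute the derivative. Trainable `tf.Variable` objects are watched automatically, but a plain tensor such as a `tf.constant` has to be watched explicitly with `tape.watch`.

```python
import tensorflow as tf

# Trainable variables are watched by the tape automatically.
x = tf.Variable(3.0)

with tf.GradientTape() as tape:
    # Every operation in this block is recorded on the tape.
    y = x ** 2 + 2.0 * x  # y = x^2 + 2x

# dy/dx = 2x + 2, evaluated at x = 3.
dy_dx = tape.gradient(y, x)
print(dy_dx.numpy())  # 8.0

# Constants are not watched unless you ask for it explicitly.
c = tf.constant(3.0)
with tf.GradientTape() as tape:
    tape.watch(c)
    z = c ** 3  # z = c^3

# dz/dc = 3c^2, evaluated at c = 3.
dz_dc = tape.gradient(z, c)
print(dz_dc.numpy())
```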

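To make the "tape" idea concrete, here is a minimal pure-Python sketch of reverse-mode automatic differentiation. It is not how TensorFlow implements `GradientTape` internally. The `Tape` and `Var` classes are hypothetical and only support scalar addition and multiplication.

The principle is the same, though. During the forward pass, each operation is recorded together with its local derivatives. Afterwards, the record is walked backwards and the chain rule accumulates the gradient of the target with respect to every input.

```python
class Tape:
    """Hypothetical minimal tape: records operations in execution order."""

    def __init__(self):
        # Each record is (output, [(input, d_output/d_input), ...]).
        self.records = []

    def gradient(self, target, sources):
        # Seed the reverse pass: d(target)/d(target) = 1.
        grads = {id(target): 1.0}
        # Walk the recorded operations backwards (reverse-mode AD).
        for out, parents in reversed(self.records):
            g = grads.get(id(out), 0.0)
            for var, local in parents:
                # Chain rule: accumulate upstream gradient times local derivative.
                grads[id(var)] = grads.get(id(var), 0.0) + g * local
        return [grads.get(id(s), 0.0) for s in sources]


class Var:
    """Hypothetical scalar value that records its operations on a tape."""

    def __init__(self, value, tape):
        self.value = value
        self.tape = tape

    def __add__(self, other):
        out = Var(self.value + other.value, self.tape)
        # d(a + b)/da = 1 and d(a + b)/db = 1
        self.tape.records.append((out, [(self, 1.0), (other, 1.0)]))
        return out

    def __mul__(self, other):
        out = Var(self.value * other.value, self.tape)
        # d(a * b)/da = b and d(a * b)/db = a
        self.tape.records.append((out, [(self, other.value), (other, self.value)]))
        return out


tape = Tape()
x = Var(3.0, tape)
w = Var(2.0, tape)
y = x * w + x * x  # y = xw + x^2
dx, dw = tape.gradient(y, [x, w])
print(dx, dw)  # 8.0 3.0  (dy/dx = w + 2x, dy/dw = x)
```

Because operations are appended in the order they execute, walking the list in reverse always visits an output before the inputs that produced it. That ordering is what lets a single backward sweep compute all the gradients.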

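Backpropagation applies this same reverse pass to a neural network's loss. The following sketch trains a tiny Keras model with a custom loop:

- the forward pass runs under a tape;
- `tape.gradient` returns the gradient of the loss with respect to every weight and bias;
- the optimizer applies those gradients.

The toy target (y = x^2 on [-1, 1]), the layer sizes, the learning rate, and the step count are illustrative assumptions. The sketch also assumes TensorFlow 2.x with its bundled `tf.keras`.

```python
import tensorflow as tf

tf.keras.utils.set_random_seed(0)  # reproducible initial weights

# Toy data: learn y = x^2 on [-1, 1] (illustrative choice).
xs = tf.reshape(tf.linspace(-1.0, 1.0, 20), (-1, 1))
ys = xs ** 2

model = tf.keras.Sequential([
    tf.keras.Input(shape=(1,)),
    tf.keras.layers.Dense(16, activation="tanh"),
    tf.keras.layers.Dense(1),
])
optimizer = tf.keras.optimizers.Adam(learning_rate=0.01)
loss_fn = tf.keras.losses.MeanSquaredError()

losses = []
for step in range(300):
    with tf.GradientTape() as tape:
        # Forward pass: the tape records every layer operation.
        loss = loss_fn(ys, model(xs))
    # Backward pass (backpropagation): gradients for all weights and biases.
    grads = tape.gradient(loss, model.trainable_variables)
    optimizer.apply_gradients(zip(grads, model.trainable_variables))
    losses.append(float(loss))

print(losses[0], losses[-1])  # the loss should drop substantially
```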

Regression with Automatic Differentiation in TensorFlow Coursya

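Linear regression shows the same pattern with explicit parameters. This is a minimal sketch that assumes noise-free synthetic data drawn from y = 3x + 2. The learning rate and number of steps are illustrative choices.

Each step does three things:

- computes the mean squared error under a `GradientTape`;
- asks the tape for the gradients with respect to the slope `w` and the intercept `b`;
- applies a plain gradient-descent update with `assign_sub`.

```python
import tensorflow as tf

# Synthetic, noise-free data from y = 3x + 2 (illustrative values).
xs = tf.constant([0.0, 1.0, 2.0, 3.0, 4.0])
ys = 3.0 * xs + 2.0

# Model parameters, initialized at zero.
w = tf.Variable(0.0)
b = tf.Variable(0.0)
learning_rate = 0.05

for step in range(500):
    with tf.GradientTape() as tape:
        preds = w * xs + b
        loss = tf.reduce_mean(tf.square(preds - ys))  # mean squared error
    # Gradients of the loss with respect to both parameters.
    dw, db = tape.gradient(loss, [w, b])
    # Plain gradient-descent update.
    w.assign_sub(learning_rate * dw)
    b.assign_sub(learning_rate * db)

print(w.numpy(), b.numpy())  # approximately 3.0 and 2.0
```

Because the data are noise-free, `w` and `b` approach 3 and 2. With noisy data they would instead converge toward the least-squares estimates.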

Software-based Automatic Differentiation is Flawed Paper and Code

Online Course Regression with Automatic Differentiation in TensorFlow

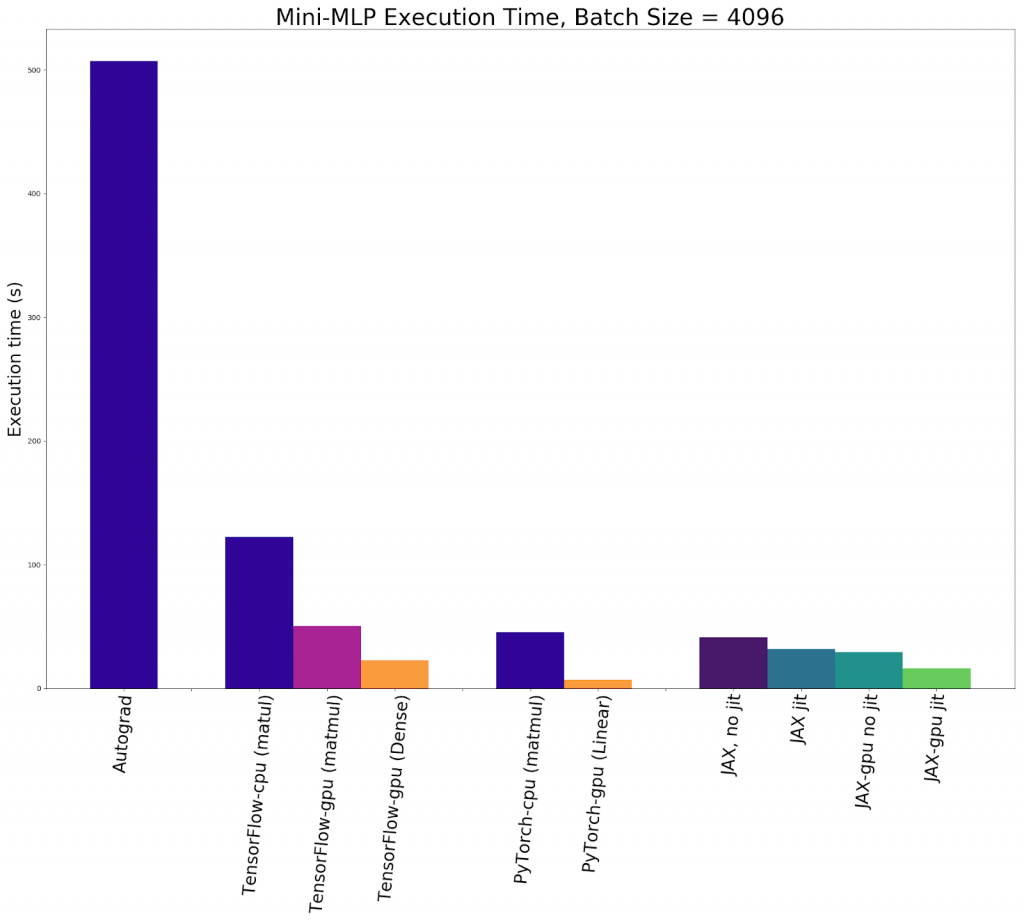

Accelerated Automatic Differentiation With JAX How Does It Stack Up

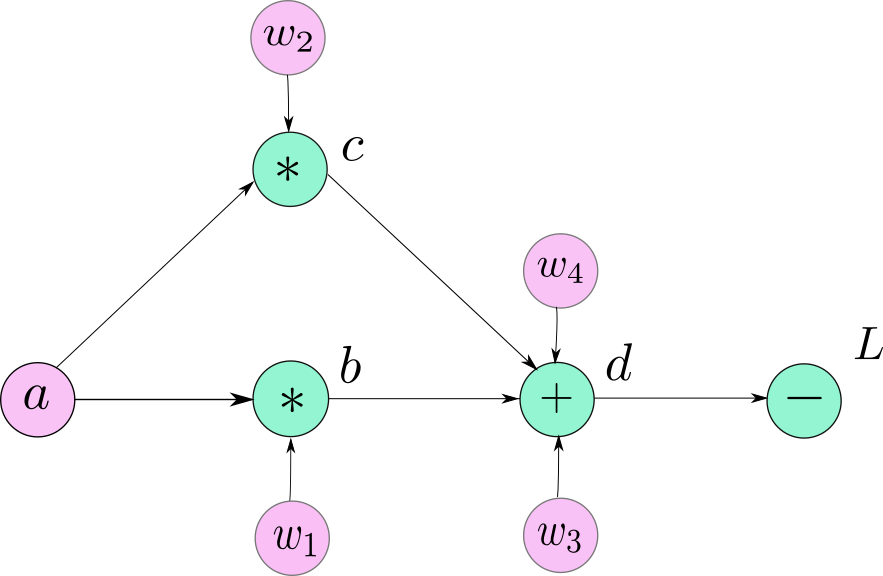

Understanding Graphs, Automatic Differentiation and Autograd BLOCKGENI

TensorFlow Automatic Differentiation (AutoDiff) by Jonathan Hui Medium

Automatic Differentiation in Pytorch DocsLib

GitHub Pikachu0405/RegressionwithAutomaticDifferentiationin
